In my previous article, “Cognitive Traps,” we explored our decision dilemma through the eyes of Nobel Prize recipient Daniel Kahneman (“Thinking, Fast and Slow”). He demonstrates quite clearly that humans are by design “predictably irrational” when solving complex problems. We all possess two interconnected but competing brains in one cranium. The more primitive but extremely fast, massively parallel brain works largely on heuristics, or “rules of thumb,” developed to quickly solve relatively simple and repetitive everyday decisions. Kahneman calls this “system one” thinking. The more recently evolved, sequential (but relatively slow) pre-frontal cortex (PFC) is the meticulous and objective auditor of our actions and home to our “higher order thinking skills” (HOTS). This brain system deciphers unique or complex situations (Kahneman’s “system two” thinking) and plans complex cognitive behaviors. The PFC also shapes our personality and is the arbiter of appropriate social behavior. Key piloting skills like “situational awareness,” planning and judgement reside here.

Without our automatic, low bandwidth “system one” heuristics we could not perform complex motor skills like flying. If every action required careful, conscious decision-making we would not get out of the starting blocks. Typically, a student pilot cannot solo until they have developed their basic control skills to an “appropriate automatic” level because they would be too busy making slow deliberate decisions for every single action to function effectively. Most basic flying decisions have to be fast, automatic and appropriate to be useful (and safe). Imagine coming down final and encountering unannounced wind shear. Slow, deliberate decision-making will not work here. We must react with a correct trained response.

Trouble in life occurs when inappropriate heuristics are applied to novel or changing situations. Imagine you learned to drive in California and only much later encountered your first icy roads in the Northeast. Your skills and automatic decisions would lead to disaster (unless overridden by the “system two” auditor). As an instructor I commonly see this with new students who try to steer a plane back onto the runway centerline on the ground with the yoke. (“Oops, thought I was driving a car!”) Another problem occurs when “system one” thinking is entirely in charge of the operation without the HOTS to audit and correct the process. I think we have all experienced those “I do not know what I was thinking” moments, or arrived somewhere in our car with no memory of the journey. This is obviously no way to fly a plane, but it is a good example of how thoroughly we can operate “on automatic.” These experiences are often the result of cognitive stress (I-M-S-A-F-E), which can hijack the more nuanced PFC. During high workload or diminished capability the reptilian action/reaction “system one” processor takes over. It has often been said that the real experience of being fully “human” involves putting some space between stimulus and response for a more nuanced life experience. Buddhist monks exist entirely in the pre-frontal cortex (but apparently do not fly planes).

“System one” thinking is tricky (think card shark at the poker table). It operates largely out of sight of the “system two” conscious auditor. Imagine we successfully navigate lousy, lower than acceptable weather to get home and arrive safely. This successful outcome validates the poor decision (reinforcement) even though luck, not skill, might be the important ingredient in this success! We now have a new (lower) standard of “acceptable” pilot behavior. This becomes the “new normal” without any conscious decision, and risk creeps into our operation surreptitiously. Additionally, system one decisions are by structure only “good enough,” or what psychologists call “satisficing” rather than optimizing. Speed is of the essence, so quality often suffers.

Unfortunately, though these two systems are largely separate, the deliberate, thoughtful “system two” brain is still heavily influenced by sensory input that “system one” has already filtered. Dr. Carl Spetzler of the Strategic Decisions Group points out: “Use of the deliberative brain is so much slower that the intuitive brain has already made some judgments (first impressions) before we even start conscious deliberation. Even during careful deliberations, we take short cuts and reason by association rather than by correct inductive or deductive reasoning. We have many distortions in both brains that make us ‘predictably irrational.’” And if you think your teenager lacks this kind of thoughtful decision making and risk management, you are right. Our PFC structure is not fully formed until about the age of 25. Knowing when and how to balance and apply these two thinking systems may help achieve a higher level of safety in aviation. This ability to tune up your mental processes in real time is the highly regarded skill called “metacognition.”

So here is a POH to consciously operate your aviation brain:

First, as pilots, we must make sure our system one heuristics are accurate and current. Heuristics are the skill sets and “rules of thumb” that run our flying in fast-changing situations. On that final approach when we experience a sudden wind shear, we must react automatically. This reaction must be appropriate, accurate and immediate to save the day. Frequently, “instinct” is all wrong, and only recent practice of correctly learned skills will result in a safe arrival. Applying yoke pressure (driving) on the runway is only one egregious example: these pilots are automatically applying an incorrect heuristic, and they do not get the result they anticipate. To be safe we must train all the correct skills with some frequency. Practice makes permanent (but not necessarily “perfect”), and we must have and apply the correct “toolkit” for each situation. Think of it as “programming your own system” for success in this area. If you do not practice frequently, you will not have the correct tools to do the job when the need arises, and you won’t be safe.

Second, we must continually and carefully filter our flying heuristics for poor decisions (coding errors?) that sneak into our system and become automatically embedded. This process is illustrated by the weather example above: erroneously reinforcing our poor decisions based entirely on lucky outcomes. Another example is internalizing untested information discovered on the internet! It is essential to take the time and effort to objectively and honestly evaluate our decisions and performance against an industry-accepted standard. A safe pilot must commit to rigorous self-analysis with proper humility. Listening to the advice of respected counselors is essential for safety.

A third important consideration is avoiding stressors like the I-M-S-A-F-E items we studied in aviation training and FAA Safety Seminars (and now you know why!). “System two” higher order thinking skills get short-circuited when we are stressed, and instead of carefully thinking our way out of a complex situation we react automatically. Stress factors such as fatigue or complacency may cause us to miss important “decision points” entirely. And even when we engage the brain fully, be careful, as Dr. Spetzler counsels: “Just becoming deliberative is no guarantee of making a quality choice. So for the most part, we make good’nuff decisions.”

A fourth suggestion is committing to standard operating procedures and clear checklist usage to provide an environment where abnormal or unusual events stand out clearly. Standardization gives us a greater opportunity to discover and manage decisions in a more controlled environment with proper tools. Avoiding surprises and controlling the variables should be a basic goal in aviation. Critical to proper functioning is also creating enough time in your planning and piloting operations to deal with the inevitable surprises in a thoughtful manner. When we “put ourselves under the gun” and require immediate solutions (our brain on adrenaline) we short-circuit the thoughtful, objective solutions and again beg for a “system one” solution.

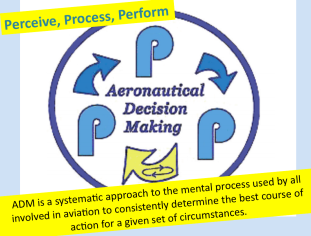

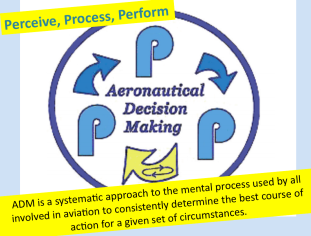

Lastly, an ongoing, active “threat and error management system” is necessary to maintain our guard while piloting. This does not need to be paranoid hyper-vigilance but rather a sustained awareness that actively processes experience and sifts it for changes that might be important. The source of my favorite paradigm is Air Force Colonel John Boyd, the fighter pilot who developed energy-maneuverability theory, taught at the Air Force Fighter Weapons School and later helped shape the F-16 at the Pentagon. His “Observe-Orient-Decide-Act” paradigm is widely taught in business schools as an effective system for meeting challenges in a time-limited, stressful and changing environment. This has been recast into “perceive, process, perform” for pilots.

The “perceive” step requires vigilance and active observation. A pilot should be alert for any changes or unusual circumstances, considering the P-A-V-E elements in dynamic interplay. Next we must “process,” or assess the meaning of what we perceive, to decide if the detected change is threatening. “Process” also requires generating viable alternatives that would provide acceptable outcomes if action is required. Finally, the “perform” step requires commitment to the best course of action and aggressively working toward a successful outcome. In addition to action, the “perform” step also requires evaluating outcomes as we enter the 3P cycle again. This is the “fast and frugal” decision-making that pilots must embrace to be “surely safe” (as opposed to “maybe safe,” where we are counting on luck or an easy ride). Pilots do not sit at a white board and parse decisions academically in a sterile environment after the game. We are usually “in the trenches” in real time and need the best decision with limited time and information. This is also called “optimization under constraint.” More on this soon…fly safely!
